University of Southern California

[Paper] [Code]

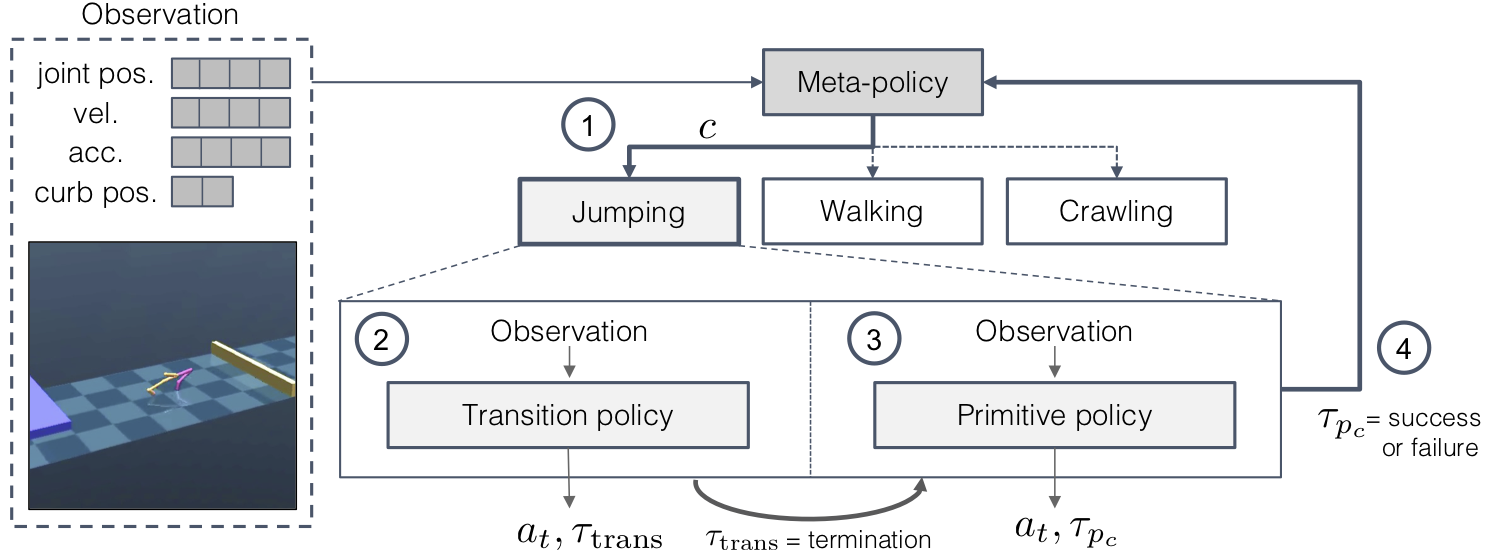

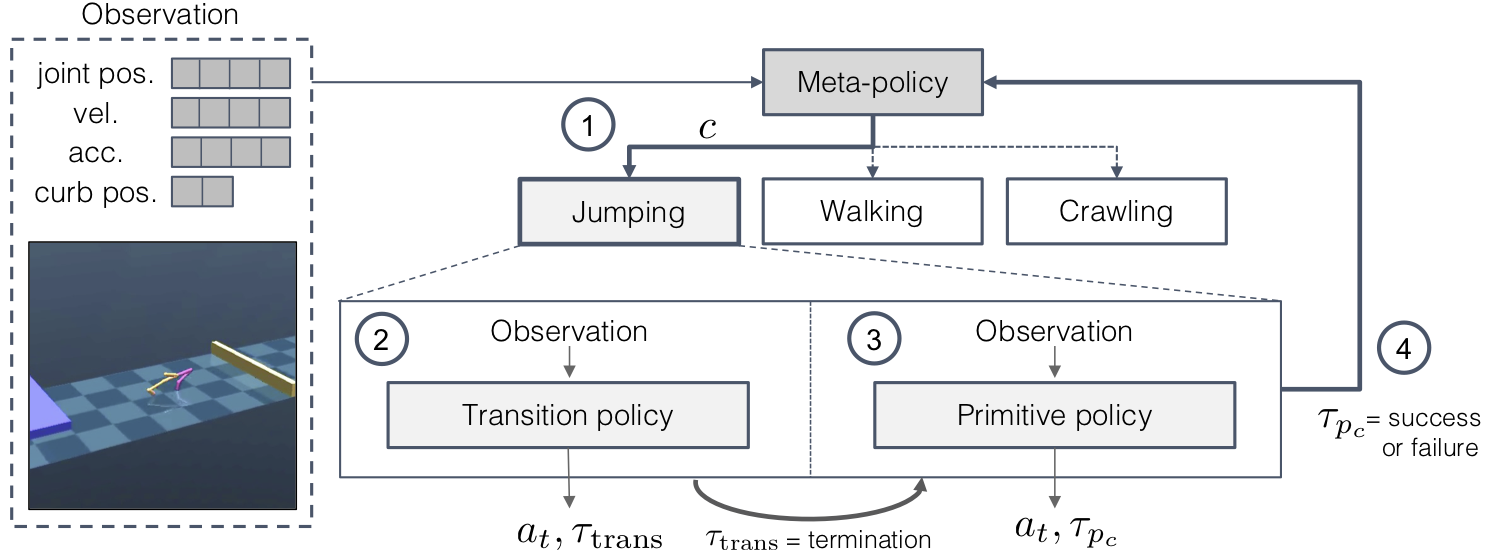

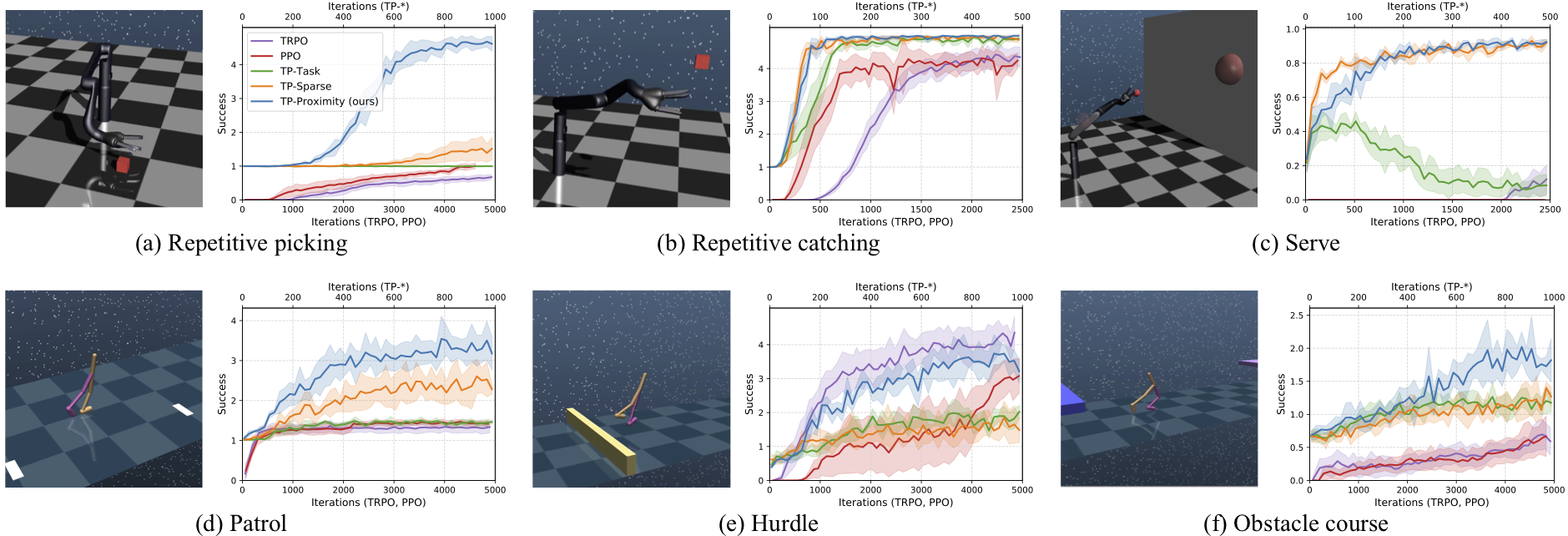

This challenging environment requires the agent to walk, jump, and crawl its way to the goal.

Inspired by tennis, this task consists of tossing a ball and hitting it toward a target.

Similar to a guard on patrol, the agent must repeatedly walk forward and backward.

Website design: BAIR paper websites